Large Language Model (LLM) Development Malta

LLM development and fine-tuning services in Malta. Custom large language models, RAG implementations, and domain-specific AI for enterprise applications.

Schedule a Consultation →

Large language models have transformed what is possible with AI, but unlocking their full potential for business applications requires expertise beyond basic API calls. Neural AI in Malta specialises in LLM development, fine-tuning, and deployment that turns powerful foundation models into reliable business tools. Our approach goes beyond generic implementations to deliver domain-specific language intelligence that understands your industry, your data, and your operational context.

Our Approach to LLM Development

Our LLM development services span the full lifecycle from model selection through production optimisation. Through our AI consulting process, we evaluate your specific requirements to determine the right approach. Not every use case requires fine-tuning; sometimes well-engineered RAG architectures deliver superior results at lower cost. We work with leading models including GPT-4, Claude, Llama, and Mistral, recommending the optimal fit based on your accuracy requirements, latency constraints, privacy needs, and budget. For Malta enterprises in regulated industries, we specialise in deployment architectures that keep sensitive data within your infrastructure while delivering the language intelligence your applications need. Our AI integration expertise ensures LLM capabilities connect seamlessly with your existing systems.

LLM Applications Across Malta’s Economy

LLM development delivers transformative capabilities across Malta’s industries. In the iGaming sector, custom LLMs power player support chatbots, generate compliance documentation, and analyse player communication patterns for responsible gaming monitoring. Financial services organisations deploy fine-tuned LLMs for regulatory report generation, risk analysis narratives, and customer communication automation. Legal firms leverage RAG-powered LLMs for case law research, contract analysis, and legal document drafting with accurate citations. Government agencies implement LLMs for citizen service chatbots, policy document summarisation, and multilingual communication. Healthcare providers use LLMs for clinical documentation, medical literature analysis, and patient communication.

Transform Your Business with Custom AI Solutions

Neural AI's Large Language Model (LLM) development solutions streamline processes and automate tasks, delivering measurable ROI for organisations in Malta and beyond. Let's discuss your project.

Schedule a Consultation →

Industry Applications

See how this solution transforms operations across different sectors.

- • Build domain-specific LLMs that understand gaming terminology, regulatory requirements, and player communication nuances

- • Our iGaming LLM solutions power player support chatbots, compliance documentation generation, and responsible gaming content systems for Malta-licensed operators

- • Deploy fine-tuned LLMs for regulatory report generation, risk analysis narrative creation, customer communication automation, and compliance document review

- • Our financial LLMs understand Malta's regulatory framework and produce outputs that meet supervisory standards

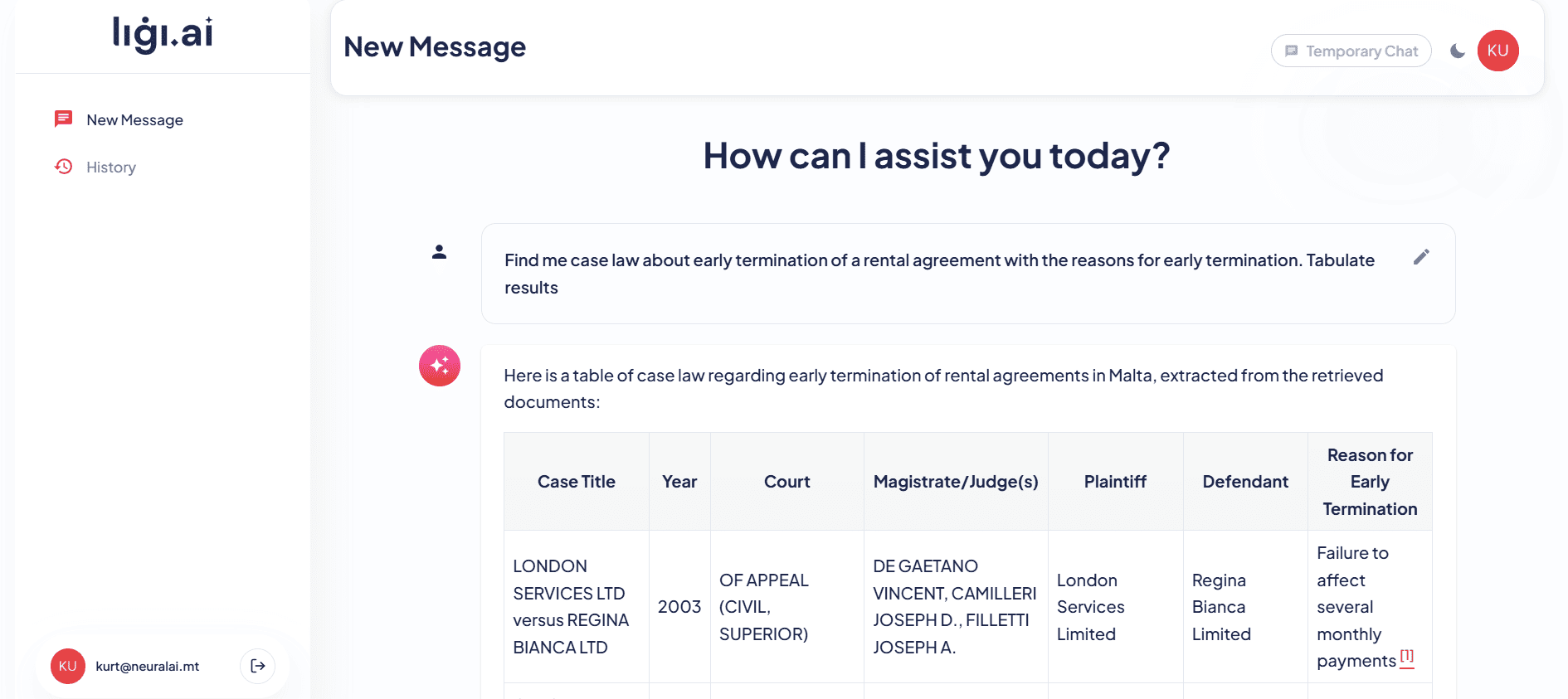

- • Implement LLM solutions for legal research, contract analysis, case summarisation, and document drafting

- • RAG architectures connect LLMs to case law databases and legislative collections, providing legally grounded responses with full citation tracking for Malta law firms

- • Develop LLM applications for clinical documentation, medical literature analysis, patient communication, and health information delivery

- • Our healthcare LLMs maintain clinical accuracy and appropriate language while complying with data protection requirements

- • Citizen service chatbots that streamline permit applications, answer public queries, and provide government information in both Maltese and English

- • Automate compliance checks and suspicious activity reporting with intelligent chatbot systems that guide users through regulatory processes

- • Property inquiry chatbots that handle tenant questions, schedule viewings, and provide instant property information 24/7

- • Guest service chatbots for booking management, concierge services, and multilingual tourist information delivery

- • Customer support and product recommendation chatbots that drive conversions and handle order inquiries automatically

- • Student support chatbots providing course information, enrollment guidance, and administrative assistance around the clock

- • Automated customer service chatbots handling account inquiries, plan changes, and technical troubleshooting

- • Internal knowledge base chatbots for maintenance procedures, safety protocols, and inventory management queries

- • Claims intake chatbots that guide policyholders through the claims process and answer coverage questions instantly

- • Client-facing chatbots that answer project queries, schedule consultations, and provide design process updates

- • Cost-effective chatbot solutions that give startups enterprise-level customer support without large support teams

- • Shipment tracking and delivery status chatbots integrated with logistics management systems

- • IT helpdesk chatbots automating ticket creation, password resets, and common troubleshooting procedures

Key Features

Custom LLM Fine-Tuning

Fine-tune foundation models like GPT-4, Claude, Llama, and Mistral on your proprietary data to create domain-specific language models that understand your industry terminology, business context, and regulatory requirements. Our fine-tuning methodology maximises performance while minimising training data requirements and costs.

RAG Architecture Design

Retrieval-Augmented Generation systems that ground LLM responses in your actual documents, databases, and knowledge bases. Our RAG pipelines reduce hallucinations and improve factual accuracy by retrieving relevant source material before generating responses, with full citation tracking.
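As a rough illustration of the retrieve-then-generate pattern, the sketch below ranks a few in-memory passages against a question and builds a prompt that carries source IDs for citation. The corpus, the document IDs, and the function names are all hypothetical, and bag-of-words cosine similarity stands in for the embedding model and vector database a real pipeline would normally use.

```python
import math
import re
from collections import Counter

# Hypothetical mini knowledge base: (source_id, passage) pairs.
CORPUS = [
    ("policy-001", "Annual leave requests must be submitted two weeks in advance."),
    ("policy-002", "Expense claims require a receipt and manager approval."),
    ("policy-003", "Remote work is permitted up to three days per week."),
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, corpus, top_k=2):
    """Return up to top_k passages with a non-zero similarity to the question."""
    q = Counter(tokenize(question))
    scored = [(cosine(q, Counter(tokenize(text))), sid, text) for sid, text in corpus]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(sid, text) for score, sid, text in scored[:top_k] if score > 0]


def build_prompt(question, passages):
    """Assemble a prompt that asks the model to cite the bracketed source IDs."""
    context = "\n".join(f"[{sid}] {text}" for sid, text in passages)
    return (
        "Answer using only the sources below and cite the source ID in brackets.\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


question = "How many days of remote work are allowed per week?"
hits = retrieve(question, CORPUS)
prompt = build_prompt(question, hits)
print(prompt)
```

The prompt would then be sent to whichever model is in use; because the source IDs travel with the passages, citations in the answer can be checked against what was actually retrieved.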

Prompt Engineering & Optimisation

Systematic prompt engineering that maximises model performance for your specific use cases. We develop tested prompt libraries, implement version-controlled prompt management systems, and create evaluation frameworks that ensure consistent, high-quality outputs across all your LLM applications.
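One way to picture a version-controlled prompt library is sketched below using only the Python standard library. The `PromptVersion` and `PromptLibrary` names, the version string, and the example template are assumptions made for illustration, not a description of any particular tool.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptVersion:
    """A named prompt template pinned to an explicit version string."""
    name: str
    version: str
    template: Template

    def render(self, **fields):
        # Template.substitute raises KeyError if a placeholder is left unfilled,
        # which surfaces broken prompts at test time rather than in production.
        return self.template.substitute(**fields)


class PromptLibrary:
    """In-memory registry keyed by (name, version); versions are immutable."""

    def __init__(self):
        self._prompts = {}

    def register(self, prompt):
        key = (prompt.name, prompt.version)
        if key in self._prompts:
            raise ValueError(f"{prompt.name} {prompt.version} is already registered")
        self._prompts[key] = prompt

    def get(self, name, version):
        return self._prompts[(name, version)]


library = PromptLibrary()
library.register(
    PromptVersion(
        name="summarise",
        version="1.0.0",
        template=Template("Summarise the following $doc_type in $max_words words or fewer:\n$text"),
    )
)

rendered = library.get("summarise", "1.0.0").render(
    doc_type="policy", max_words=50, text="Staff may work remotely three days per week."
)
print(rendered)
```

Pinning each prompt to a version means an evaluation result can always be traced back to the exact wording that produced it.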

Model Evaluation & Benchmarking

Rigorous evaluation frameworks that measure accuracy, relevance, safety, latency, and cost-efficiency across different models and configurations. Our benchmarking ensures you deploy the right LLM for each use case, balancing performance with operational costs.
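A benchmarking harness of this kind can be sketched in a few lines. In the example below the two "models" are stub functions and the test set is a toy one, both hypothetical; a real comparison would call actual model endpoints and use a domain-specific test set with richer scoring than exact match.

```python
import time
from statistics import mean

# Toy test set of (prompt, expected answer) pairs.
TEST_SET = [
    ("Capital of Malta?", "Valletta"),
    ("Official languages of Malta?", "Maltese and English"),
    ("Currency of Malta?", "Euro"),
]


def evaluate(model, test_set):
    """Run every prompt through the model and report accuracy and mean latency."""
    correct = 0
    latencies = []
    for prompt, expected in test_set:
        start = time.perf_counter()
        answer = model(prompt)
        latencies.append(time.perf_counter() - start)
        # Exact match after normalising case and surrounding whitespace.
        correct += int(answer.strip().lower() == expected.strip().lower())
    return {"accuracy": correct / len(test_set), "mean_latency_s": mean(latencies)}


# Stub "models" standing in for real LLM calls.
_LOOKUP = dict(TEST_SET)


def model_a(prompt):
    return _LOOKUP[prompt]


def model_b(prompt):
    return "Valletta"


results = {
    name: evaluate(fn, TEST_SET)
    for name, fn in [("model_a", model_a), ("model_b", model_b)]
}
for name, metrics in results.items():
    print(name, metrics)
```

Adding a per-request cost estimate to the returned metrics would let the same loop cover the cost-efficiency side of the comparison.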

Benefits

Discover how our Large Language Model (LLM) development services deliver measurable results for your organisation.

01 Domain-Specific Intelligence

Generic LLMs lack industry knowledge. Fine-tuned models understand Malta-specific regulations, industry jargon, local context, and business processes, delivering far more relevant and accurate outputs than off-the-shelf models for your particular domain.

02 Reduced Hallucination Risk by 90%+

RAG architectures and fine-tuning dramatically reduce fabricated responses by grounding model outputs in verified source material. This is critical for professional and regulated applications, where accuracy is non-negotiable and incorrect information creates liability.

03 Cost-Optimised Inference Saving 50-80%

Choosing the right-sized model for each task, and fine-tuning smaller models to match larger-model performance, reduces inference costs by 50-80% without sacrificing output quality. We optimise the performance-cost trade-off for your specific usage patterns and budget.
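The arithmetic behind a model-size comparison can be sketched as follows. Every number here (request volume, token counts, and per-1,000-token prices) is invented purely for illustration and does not reflect any provider's actual pricing; the resulting saving is only as meaningful as the inputs.

```python
def monthly_cost(requests_per_month, input_tokens, output_tokens,
                 price_in_per_1k, price_out_per_1k):
    """Estimated monthly spend for a workload with fixed token counts per request."""
    per_request = (
        (input_tokens / 1000) * price_in_per_1k
        + (output_tokens / 1000) * price_out_per_1k
    )
    return requests_per_month * per_request


# Hypothetical workload: 100,000 requests, 1,500 input and 300 output tokens each.
WORKLOAD = dict(requests_per_month=100_000, input_tokens=1_500, output_tokens=300)

# Hypothetical prices per 1,000 tokens for a larger and a smaller model.
large = monthly_cost(**WORKLOAD, price_in_per_1k=0.010, price_out_per_1k=0.030)
small = monthly_cost(**WORKLOAD, price_in_per_1k=0.004, price_out_per_1k=0.012)

saving = 1 - small / large
print(f"large: {large:.2f}  small: {small:.2f}  saving: {saving:.0%}")
```

The saving only counts if the smaller model still meets the accuracy target on the same test set, so a cost comparison like this is normally read alongside evaluation results.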

04 Data Privacy & Sovereignty

Self-hosted and private cloud deployment options ensure sensitive business data never leaves your infrastructure. Our on-premise and private cloud LLM deployments meet Malta and EU data sovereignty requirements for the most security-conscious organisations.

Our Large Language Model (LLM) Development Process

We evaluate your LLM use cases, data assets, accuracy requirements, and deployment constraints. This includes assessing data quality, identifying domain-specific terminology, and establishing performance baselines against generic models.

Based on assessment findings, we select foundation models, define fine-tuning strategies, design RAG architectures, and plan evaluation frameworks. We balance performance targets against cost constraints and deployment requirements.

Technical architecture design covers model selection rationale, fine-tuning pipeline specifications, RAG retrieval and chunking strategies, embedding model selection, vector database configuration, and inference infrastructure planning.
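To make "chunking strategy" concrete, here is a minimal sketch of one common option: fixed-size word windows with overlap, so that a sentence cut at a boundary still appears whole in a neighbouring chunk. The function name and default sizes are assumptions; real pipelines often chunk by tokens, headings, or sentences instead.

```python
def chunk_words(text, chunk_size=200, overlap=40):
    """Split text into windows of chunk_size words, each sharing `overlap` words
    with the previous window."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once the current window has reached the end of the text,
        # so no trailing chunk is made up entirely of overlap.
        if start + chunk_size >= len(words):
            break
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
chunks = chunk_words(sample, chunk_size=4, overlap=1)
for chunk in chunks:
    print(chunk)
```

Larger chunks give the model more context per retrieved passage, while smaller chunks make retrieval more precise; the overlap trades some index size for fewer broken sentences.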

We prepare training datasets, execute fine-tuning runs with hyperparameter optimisation, build RAG pipelines with document processing and embedding, and develop prompt libraries tested against your specific use cases.

We run a comprehensive evaluation using domain-specific test sets, adversarial prompts, factual accuracy benchmarks, and bias assessments. We validate RAG retrieval quality, citation accuracy, and edge case handling before production deployment.

We deploy to production with optimised inference pipelines, caching strategies, load balancing, and monitoring. We integrate LLM capabilities into your applications via well-designed APIs with proper authentication and rate limiting.
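Two of the deployment concerns mentioned here, caching and rate limiting, can be sketched together. The example below wraps a stand-in backend function with an exact-match response cache and a token-bucket limiter; the class names and the `backend` callable are hypothetical, and a production system would more likely use a shared cache and limiter at the API gateway.

```python
import hashlib
import time


class TokenBucket:
    """Token-bucket limiter: refills at rate_per_s, holds at most `capacity` tokens."""

    def __init__(self, rate_per_s, capacity, clock=time.monotonic):
        self.rate = rate_per_s
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.last = clock()

    def allow(self):
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class CachedLLM:
    """Serves repeated prompts from a cache; only cache misses consume rate limit."""

    def __init__(self, backend, limiter):
        self.backend = backend
        self.limiter = limiter
        self.cache = {}

    def complete(self, prompt):
        # Collapse whitespace so trivially different prompts share a cache entry.
        normalised = " ".join(prompt.split())
        key = hashlib.sha256(normalised.encode("utf-8")).hexdigest()
        if key in self.cache:
            return self.cache[key]
        if not self.limiter.allow():
            raise RuntimeError("rate limit exceeded")
        self.cache[key] = self.backend(normalised)
        return self.cache[key]


def fake_backend(prompt):
    """Stand-in for a real model call."""
    return f"echo: {prompt}"


llm = CachedLLM(fake_backend, TokenBucket(rate_per_s=5, capacity=10))
print(llm.complete("What is RAG?"))
print(llm.complete("What  is   RAG?"))  # served from the cache
```

Exact-match caching only helps when identical prompts recur; it is shown here because it is the simplest form to reason about.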

We monitor model accuracy, response quality, latency, and costs on an ongoing basis. We implement feedback loops for continuous improvement, manage model updates, and expand capabilities based on emerging use cases and evolving foundation models.

Proven Results

Ligi.ai — LLM-Powered Legal Research

Neural AI built Ligi.ai, a custom AI legal assistant for Maltese law firms that combines retrieval-augmented generation with deep knowledge of Maltese legislation. The system assists lawyers with document drafting, legal research across case law, and document review, reducing research time by over 70%.

Reforms Summarisation — LLM Document Analysis

mySocialSecurity — LLM-Powered Citizen Services

Neural AI created an intelligent bilingual chatbot connecting to Malta's social security systems, providing citizens with 24/7 Q&A guidance about their benefits, entitlements, and application processes in both English and Maltese.

Powered by Neural AI Products

Our proprietary AI product suite that accelerates delivery and reduces cost.

NeuroRAG →

Grounds every response in your actual business data through retrieval-augmented generation, connecting to your knowledge base and documentation to keep outputs accurate and grounded in your sources.

NeuroAgentic →

Enables AI systems to go beyond answering questions to actually executing tasks, such as booking appointments, processing refunds, updating records, and triggering workflows across your systems.

NeuroSummarisation →

Automatically condenses long documents, meeting transcripts, and research papers into concise, accurate summaries tailored to your specific needs.

Our Generative AI & Chatbots Tech Stack

Foundation Models

Fine-Tuning

RAG

Vector DBs

Evaluation

Cloud

Languages

Flexible Engagement Models

Choose the engagement model that best fits your organisation's needs and goals.

Project-Based

Clearly scoped AI projects with defined deliverables, timelines, and budgets. Ideal for proof-of-concepts, MVPs, or specific AI implementations.

Team Extension

Augment your existing team with our AI specialists. We integrate seamlessly into your workflows, tools, and culture to accelerate delivery.

Dedicated AI Team

A full AI team embedded in your organisation, working exclusively on your projects with deep domain knowledge and consistent delivery.

Ready to Discuss Your Large Language Model (LLM) Development Project?

Book a free consultation with our Malta-based AI team and discover how we can help.

Book a Free AI Consultation →

Investment & Timeline

Transparent ballpark pricing to help you plan your project. Final costs depend on scope, integrations, and complexity.

Starter

- Single-channel chatbot deployment

- Up to 50 intents / conversation flows

- Integration with 1 existing system (CRM, helpdesk, etc.)

- Basic analytics dashboard

- Handoff to human agent

- 30-day post-launch support

Growth

- Multi-channel deployment (web, WhatsApp, Messenger)

- RAG-powered knowledge base integration

- Up to 150 intents + dynamic responses

- Integration with 2–3 existing systems

- Custom analytics & reporting

- A/B testing & continuous optimisation

- 90-day post-launch support

Enterprise

- Full agentic AI with task execution

- Unlimited channels & integrations

- Fine-tuned or custom LLM deployment

- Advanced compliance & data residency controls

- Dedicated AI engineer during rollout

- Ongoing retainer & SLA agreement

All estimates are project-specific. Book a discovery call for a tailored quote. Prices shown are indicative ranges for Malta market engagements.

Common Scenarios We Work On

Real situations our clients bring to us — if any of these sound familiar, we can help.

Head of IT, professional services firm

"We have 10 years of internal documents, policies, and project notes that staff can't find or search easily — we want an AI that knows everything in our knowledge base"

We build a RAG-based internal knowledge assistant that indexes all your documents, answers staff questions in plain English, and cites the source document — deployed as a private internal tool.

CTO, legal technology company

"We want to build a legal research assistant that can search case law, summarise relevant cases, and draft initial arguments — but it must cite sources and not hallucinate"

We build a grounded legal AI using RAG over verified legal databases, with mandatory source citations, confidence indicators, and a human review step before any output leaves the system.
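A mandatory-citation check ahead of human review might look something like the sketch below. The bracketed `[source-id]` citation format, the function name, and the status labels are assumptions made for this example; the idea is simply that an answer with no citations, or with citations that were never retrieved, is rejected before it reaches a reviewer.

```python
import re

# Assumed citation format: source IDs in square brackets, e.g. [case-123].
CITATION = re.compile(r"\[([A-Za-z0-9_-]+)\]")


def citation_gate(answer, retrieved_ids):
    """Check that every citation in the answer refers to a retrieved source."""
    cited = set(CITATION.findall(answer))
    unknown = cited - set(retrieved_ids)
    if not cited or unknown:
        status = "reject"  # uncited or citing something that was not retrieved
    else:
        status = "send_to_reviewer"  # passes the automatic check; a human still reviews
    return {"status": status, "cited": sorted(cited), "unknown": sorted(unknown)}


retrieved = ["case-101", "case-204"]
print(citation_gate("The duty of care was established in [case-101].", retrieved))
print(citation_gate("See [case-999] for the leading authority.", retrieved))
print(citation_gate("The claim is likely to succeed.", retrieved))
```

A check like this only confirms that the cited sources exist in the retrieved set; whether each source actually supports the claim is what the human review step is for.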

Head of Compliance, financial institution

"Our compliance team spends hours searching regulation documents to answer internal queries — we want an AI assistant that can answer regulatory questions instantly with the right source cited"

We build a compliance AI assistant indexed on your regulatory document library, providing instant answers to compliance queries with the exact paragraph cited — reducing research time from hours to seconds.

Founder, SaaS startup

"I want to add an AI assistant to my product so users can ask questions about their own data, something like 'what were my top-performing campaigns last month?'"

We build a natural language query layer over your product data, letting users ask questions in plain language and receive structured answers with charts — deployed as an embedded widget in your app.

Why Clients Trust Neural AI

AI projects delivered across Malta and Europe

Malta-based team, EU data residency & GDPR compliance

End-to-end delivery from strategy to production

Ongoing support & maintenance included post-launch

Large Language Model (LLM) Development FAQ

What is LLM development?

LLM development encompasses the process of customising, fine-tuning, and deploying large language models for specific business applications. At Neural AI in Malta, this includes selecting the right foundation model, fine-tuning it on your domain data, building RAG systems for factual grounding, engineering effective prompts, and deploying optimised inference pipelines that balance performance with cost.

How can LLM development benefit my business?

Custom LLM development transforms how your organisation handles language-intensive tasks. Malta businesses use our LLM solutions for automated document processing, intelligent search, content generation, code assistance, customer support, and decision support. Fine-tuned models deliver dramatically better results than generic models for your specific domain, while RAG systems ensure factual accuracy.

What industries benefit from LLM development in Malta?

LLM development delivers strong results across Malta's key industries. iGaming operators use custom LLMs for player communication and compliance documentation. Financial services firms deploy them for regulatory reporting and risk analysis. Legal firms leverage LLMs for contract analysis and research. Healthcare organisations use them for clinical documentation and patient communication. Government agencies implement LLMs for citizen services.

How does Neural AI approach LLM development?

We take a pragmatic approach that matches the right model and technique to each use case. Not every application needs fine-tuning, and not every deployment needs the largest model. We evaluate whether prompt engineering, RAG, fine-tuning, or a combination delivers the best results for your requirements, always optimising the balance between quality, cost, and latency.

What technologies do you use for LLM development?

We work with leading foundation models including GPT-4, Claude, Gemini, Llama, and Mistral. Our fine-tuning infrastructure uses Hugging Face, LoRA/QLoRA techniques, and distributed training on GPU clusters. RAG implementations leverage LangChain, LlamaIndex, and vector databases including Pinecone, Weaviate, and Qdrant. We deploy on AWS, Azure, and Google Cloud.
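To give a sense of why LoRA-style fine-tuning is cheaper than full fine-tuning: LoRA freezes a weight matrix of shape d_out × d_in and trains two small matrices of shapes d_out × r and r × d_in in its place. The sketch below just counts parameters for one such matrix; the 4096 × 4096 shape and rank 8 are illustrative values, not a statement about any specific model or about the configuration used on a given project.

```python
def full_params(d_in, d_out):
    """Parameters updated when fine-tuning the whole d_out x d_in weight matrix."""
    return d_in * d_out


def lora_params(d_in, d_out, rank):
    """Parameters trained by LoRA: B (d_out x rank) plus A (rank x d_in)."""
    return rank * (d_in + d_out)


d_in = d_out = 4096  # illustrative layer size
rank = 8             # illustrative LoRA rank

full = full_params(d_in, d_out)        # 16,777,216
lora = lora_params(d_in, d_out, rank)  # 65,536
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```

QLoRA applies the same idea on top of a quantised base model, which reduces the memory needed to hold the frozen weights during training.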

How long does an LLM development project take?

Timeline depends on the approach. RAG implementations with prompt engineering typically take three to six weeks. Fine-tuning projects require four to eight weeks including data preparation. Complex multi-model architectures with custom evaluation frameworks may take eight to twelve weeks from discovery to production deployment.

Do you provide ongoing support after LLM deployment?

Yes. LLM solutions require ongoing management as foundation models evolve and your data changes. Our support includes model performance monitoring, RAG knowledge base updates, prompt optimisation, cost management, and migration planning when new model versions offer improved capabilities.

How do I get started with LLM development in Malta?

Book a free consultation to discuss your LLM requirements. We will assess your use cases, evaluate your data assets, and recommend the optimal approach. Our discovery process identifies quick wins that demonstrate value immediately while building toward a comprehensive LLM strategy.

Explore More AI Solutions

AI Chatbot Development

AI chatbot solutions powered by custom LLM implementations.

Explore →

AI Agent Development

Autonomous agents built on LLM reasoning capabilities.

Explore →

ChatGPT Development

OpenAI GPT-specific development and integration services.

Explore →

Gemini Development

Google Gemini development and multimodal AI integration.

Explore →

Document AI Development

Intelligent document processing powered by LLM understanding.

Explore →

Related Articles

The Complete Guide to AI Chatbot Development in Malta

Everything you need to know about building AI-powered chatbots for Maltese businesses, from planning and NLP integration to deployment and optimisation.

Read article →

Building a Multilingual AI Chatbot with Maltese Language Support

How to build AI chatbots that understand and respond in Maltese alongside English, addressing the unique NLP challenges of Malta's bilingual business environment.

Read article →

AI Chatbots vs Rule-Based Chatbots: What Malta Businesses Need to Know

A practical comparison of AI-powered and rule-based chatbots for Malta businesses, covering capabilities, costs, use cases, and when to choose which approach.

Read article →

Start Your AI Journey

Contact Us

Reach out through our form or book a call to discuss your AI needs.

Get a Consultation

Our AI experts analyse your requirements and identify the best approach.

Receive a Proposal

We deliver a detailed proposal with timeline, deliverables, and investment.

Project Kickoff

We assemble your team and begin building your AI solution.

Ready to Get Started?

Book a free AI consultation with our Malta-based team and discover how we can transform your business with intelligent solutions.