NeuroRAG

Ground AI responses in your data with retrieval-augmented generation

Retrieval-augmented generation platform that powers intelligent chatbots and knowledge systems by grounding LLM responses in your actual data.

NeuroRAG is the backbone of Neural AI’s conversational AI products and one of the most widely deployed components in the NeuroStack platform. It combines large language models with intelligent retrieval systems that search your proprietary data — documents, databases, websites, and knowledge bases — to generate responses that are accurate, cited, and grounded in fact rather than hallucination. Every major chatbot deployment and RAG solution we build is powered by NeuroRAG at its core.

How It Works

When a user asks a question, NeuroRAG first searches your indexed knowledge base using semantic similarity, keyword matching, and contextual re-ranking. The most relevant passages are then provided as context to the language model via NeuroIntelligence, which generates a natural-language answer with references to the source material. This approach ensures responses reflect your actual data rather than the model’s general training. For Maltese-language queries, NeuroMaltese handles bilingual understanding and response generation seamlessly.

Enterprise Knowledge Bases

NeuroRAG ingests documents in any format — PDFs, Word files, web pages, spreadsheets, database records — and builds a searchable vector index. NeuroDocument handles OCR for scanned materials, while NeuroScraper collects content from web sources automatically. Incremental updates keep the index current as your knowledge base evolves, with no downtime or full re-indexing required. The system scales from small knowledge bases of a few hundred documents to enterprise collections with millions of records.

Accuracy and Trust

Every NeuroRAG response includes source citations, confidence scores, and the ability to trace answers back to specific documents. For regulated industries such as financial services and government, this audit trail is essential for compliance and accountability. NeuroSummarisation can condense lengthy source passages into digestible summaries while maintaining citation integrity.

Proven at Scale

NeuroRAG powers chatbots for Ligi.ai’s legal research platform, the mySocialSecurity government portal, Browns supermarket customer service, and Climate Action’s environmental advisory tool. Each deployment handles thousands of queries daily with consistently high accuracy. When deployed with NeuroAgentic, NeuroRAG-powered systems can go beyond answering questions to actively executing tasks on behalf of users, creating truly autonomous AI assistants.

Multi-Channel Deployment

Through NeuroMessaging, NeuroRAG chatbots deploy across WhatsApp, Facebook Messenger, email, and web chat simultaneously. Through NeuroWeb, they integrate directly into WordPress, Shopify, and custom CMS platforms. This flexibility means your knowledge base is accessible wherever your users are, with consistent quality across every channel.

Deploy NeuroRAG in Your Organisation

Neural AI's NeuroRAG accelerates delivery, reduces cost, and integrates seamlessly with your existing systems. Let's discuss how it fits your workflow.

Schedule a Consultation →

Key Features

Semantic Search & Retrieval

NeuroRAG uses dense vector embeddings combined with sparse keyword matching and contextual re-ranking to find the most relevant passages from your knowledge base. The hybrid retrieval approach ensures both precise keyword matches and semantically similar content are surfaced, delivering comprehensive results even when users phrase questions in unexpected ways.

Multi-Source Knowledge Ingestion

Ingest documents in any format, including PDFs, Word files, web pages, spreadsheets, database records, and API endpoints. NeuroRAG builds a unified, searchable vector index across all sources. Incremental updates keep the index current as your knowledge base evolves, with no downtime or full re-indexing.

Source Citation & Audit Trail

Every response includes source citations with document references, page numbers, and confidence scores. Users can trace any answer back to the specific document and passage that informed it. For regulated industries like financial services and government, this complete audit trail meets compliance and accountability requirements.

Hallucination Prevention

NeuroRAG implements multiple guardrails against hallucination, including retrieval confidence thresholds, answer grounding verification, and explicit uncertainty flagging when the knowledge base lacks sufficient information. The system declines to speculate rather than generate plausible but incorrect answers, so users can see when a question falls outside what the knowledge base supports.
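The guardrails described above can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `Retrieved` type, the `min_score` and `min_overlap` thresholds, and the crude word-overlap grounding check are hypothetical and are not NeuroRAG's actual interface. A production grounding check would more likely use an entailment model or a second LLM pass.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Retrieved:
    text: str
    score: float  # assumed similarity in [0, 1], higher is better


NO_ANSWER = "I could not find enough information in the knowledge base to answer that."


def select_context(hits: list[Retrieved], min_score: float = 0.6,
                   min_hits: int = 1) -> Optional[list[Retrieved]]:
    """Keep only confident passages; return None when too few remain."""
    confident = [h for h in hits if h.score >= min_score]
    return confident if len(confident) >= min_hits else None


def is_grounded(answer: str, context: list[Retrieved], min_overlap: float = 0.5) -> bool:
    """Crude lexical check: share of answer words that also occur in the context."""
    answer_words = {w.strip(".,").lower() for w in answer.split()}
    answer_words.discard("")
    context_words = {w.strip(".,").lower() for h in context for w in h.text.split()}
    if not answer_words:
        return False
    return len(answer_words & context_words) / len(answer_words) >= min_overlap


def guarded_answer(draft: str, hits: list[Retrieved]) -> str:
    """Return the draft only if retrieval was confident and the draft is grounded."""
    context = select_context(hits)
    if context is None or not is_grounded(draft, context):
        return NO_ANSWER  # flag uncertainty instead of speculating
    return draft
```

In this sketch the refusal message is returned both when retrieval is weak and when the drafted answer drifts away from the retrieved passages, which mirrors the two failure modes named above.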

How NeuroRAG Works

Your documents are processed, cleaned, and split into semantically meaningful chunks using intelligent boundary detection. Each chunk preserves context including document metadata, section headers, and surrounding content for accurate retrieval.
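As a rough illustration of chunking with preserved context, the sketch below splits a document on paragraph boundaries and attaches the document ID and the most recent section header to each chunk. It assumes headers are marked with a leading `#`; the `Chunk` type, `chunk_document` function, and `max_chars` limit are hypothetical names chosen for this example, not NeuroRAG's boundary detection.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    doc_id: str
    section: str  # most recent header seen before this chunk
    text: str


def chunk_document(doc_id: str, text: str, max_chars: int = 500) -> list[Chunk]:
    """Pack paragraphs into chunks of up to max_chars, never crossing a header."""
    chunks: list[Chunk] = []
    section = ""
    buffer: list[str] = []

    def flush() -> None:
        if buffer:
            chunks.append(Chunk(doc_id, section, "\n\n".join(buffer)))
            buffer.clear()

    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        if para.startswith("#"):
            flush()  # a header closes the chunk that precedes it
            section = para.lstrip("# ").strip()
            continue
        if buffer and sum(len(p) for p in buffer) + len(para) > max_chars:
            flush()  # size limit reached; start a new chunk in the same section
        buffer.append(para)
    flush()
    return chunks
```

Splitting only at paragraph and header boundaries keeps each chunk readable on its own, which matters because the chunk text is what the language model eventually sees.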

Chunks are converted to dense vector embeddings using state-of-the-art embedding models. These vectors are stored in a high-performance vector database alongside the original text and metadata, creating a searchable knowledge index.
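To make the idea of a knowledge index concrete, here is a toy in-memory version that stores a vector alongside the original text and metadata. The `toy_embed` function is a deliberate stand-in (a hashed bag-of-words), not a real embedding model, and `VectorIndex` is a hypothetical class; a real deployment would call an embedding model and a dedicated vector database.

```python
import hashlib
import math

DIM = 64  # toy dimensionality; real embedding models use far more dimensions


def toy_embed(text: str) -> list[float]:
    """Stand-in for an embedding model: hashed bag-of-words, L2-normalised."""
    vec = [0.0] * DIM
    for token in text.lower().split():
        bucket = int(hashlib.sha256(token.encode("utf-8")).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class VectorIndex:
    """Stores (vector, text, metadata) rows and searches by cosine similarity."""

    def __init__(self) -> None:
        self.rows: list[tuple[list[float], str, dict]] = []

    def add(self, text: str, metadata: dict) -> None:
        self.rows.append((toy_embed(text), text, metadata))

    def search(self, query: str, k: int = 3) -> list[tuple[float, str, dict]]:
        q = toy_embed(query)
        # Vectors are unit length, so the dot product equals cosine similarity.
        scored = [(sum(a * b for a, b in zip(q, vec)), text, meta)
                  for vec, text, meta in self.rows]
        scored.sort(key=lambda row: row[0], reverse=True)
        return scored[:k]
```

Keeping the original text and metadata next to each vector is what later allows a response to cite the exact document and section it drew on.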

When a user asks a question, the query is embedded and matched against the knowledge index using hybrid search combining semantic similarity, keyword matching, and contextual re-ranking to find the most relevant passages.
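One common way to combine a semantic ranking with a keyword ranking is reciprocal rank fusion (RRF). The sketch below shows that technique as an example only; the source does not say which fusion method NeuroRAG uses, and the function name and the constant `k = 60` are assumptions for illustration.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of passage IDs into one fused ranking.

    Each passage scores 1 / (k + rank) per list it appears in; scores are summed.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, passage_id in enumerate(ranking, start=1):
            scores[passage_id] = scores.get(passage_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda pid: scores[pid], reverse=True)


semantic_hits = ["a", "b", "c"]  # ranked by embedding similarity
keyword_hits = ["b", "d", "a"]   # ranked by keyword match
fused = reciprocal_rank_fusion([semantic_hits, keyword_hits])
```

Passages that appear near the top of both lists rise to the top of the fused list, and a re-ranking model could then reorder that shortlist before it is passed to the language model.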

Retrieved passages are provided as context to a large language model, which generates a natural-language answer grounded in the source material. The response includes citations and confidence indicators so users can verify accuracy.
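The step above can be sketched as prompt assembly: retrieved passages are numbered so the model can cite them, and the instructions tell it to stay within them. The `Passage` type, `build_grounded_prompt` function, and prompt wording are hypothetical; the actual prompts NeuroRAG sends through NeuroIntelligence are not described in the source.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # document name, used in the citation
    page: int
    text: str


def build_grounded_prompt(question: str, passages: list[Passage]) -> str:
    """Number each passage so the model can cite it as [n]."""
    context = "\n".join(
        f"[{i}] ({p.source}, p. {p.page}) {p.text}"
        for i, p in enumerate(passages, start=1)
    )
    return (
        "Answer using only the numbered passages below. "
        "Cite passages as [n]. If they do not contain the answer, say so.\n\n"
        f"Passages:\n{context}\n\nQuestion: {question}\nAnswer:"
    )


prompt = build_grounded_prompt(
    "How many days of annual leave do staff get?",
    [Passage("handbook.pdf", 12, "Full-time staff receive 25 days of annual leave.")],
)
```

Because each `[n]` marker maps back to a source and page, citations in the generated answer can be resolved to the original document after generation.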

New documents are incrementally indexed without rebuilding the entire knowledge base. Usage analytics identify knowledge gaps, and feedback loops improve retrieval quality over time through re-ranking model fine-tuning.
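Incremental indexing is often implemented by comparing content hashes, so that only new or changed documents are re-processed. The sketch below shows that approach under stated assumptions: `content_hash` and `plan_incremental_update` are hypothetical helpers, and the source does not confirm that NeuroRAG detects changes this way.

```python
import hashlib


def content_hash(text: str) -> str:
    """Stable fingerprint of a document's content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_incremental_update(indexed: dict[str, str],
                            current_docs: dict[str, str]) -> dict[str, list[str]]:
    """Decide which documents to add, re-index, or remove.

    indexed maps doc_id to the hash stored at last indexing;
    current_docs maps doc_id to the document's current text.
    """
    plan: dict[str, list[str]] = {"add": [], "update": [], "delete": []}
    for doc_id, text in current_docs.items():
        if doc_id not in indexed:
            plan["add"].append(doc_id)
        elif indexed[doc_id] != content_hash(text):
            plan["update"].append(doc_id)
    plan["delete"] = [doc_id for doc_id in indexed if doc_id not in current_docs]
    return plan
```

Unchanged documents appear in none of the three lists, so their chunks and vectors are left alone, which is what avoids a full rebuild.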

Use Cases

Build customer-facing chatbots that answer questions from your knowledge base accurately

Create internal knowledge assistants for employee onboarding and policy lookup

Power legal research tools that cite specific clauses and precedents

Enable government services chatbots that reference actual legislation and procedures

Deploy KYC onboarding assistants that guide users through compliance requirements

Industry Applications

See how this solution transforms operations across different sectors.

- Powers legal research tools that search through legislation, case law, and regulatory guidance, enabling lawyers to find relevant precedents and draft documents faster with AI-cited references

- Enables citizen-facing chatbots that accurately answer questions about government services, benefits, and procedures by referencing actual legislation and official documentation

- Builds clinical knowledge assistants that reference medical guidelines, drug interactions, and treatment protocols, supporting healthcare professionals with evidence-based information retrieval

- Powers compliance assistants and customer service tools that ground responses in actual regulatory requirements, product documentation, and policy details for financial institutions

- Predictive models for player behaviour analysis, fraud detection, and personalised gaming experiences powered by machine learning

- Machine learning models that detect suspicious transaction patterns and automate regulatory reporting workflows

- Property valuation models, market trend prediction, and tenant risk assessment using AI and historical data

- Demand forecasting, dynamic pricing, and personalised guest experience systems for hotels and tourism operators

- Customer segmentation, demand forecasting, and inventory optimisation powered by machine learning algorithms

- Adaptive learning platforms, student performance prediction, and curriculum optimisation through AI analysis

- Network optimisation, churn prediction, and usage pattern analysis for telecoms operators

- Predictive maintenance, quality control automation, and production line optimisation using AI

- Claims prediction, risk assessment automation, and fraud detection models for insurance providers

- Generative design optimisation, structural analysis, and project cost estimation using AI

- Rapid ML prototyping and model development that gives startups a data-driven competitive advantage

- Route optimisation, demand forecasting, and warehouse automation powered by machine learning

- Threat detection, anomaly identification, and security incident prediction using AI models

Proven Results

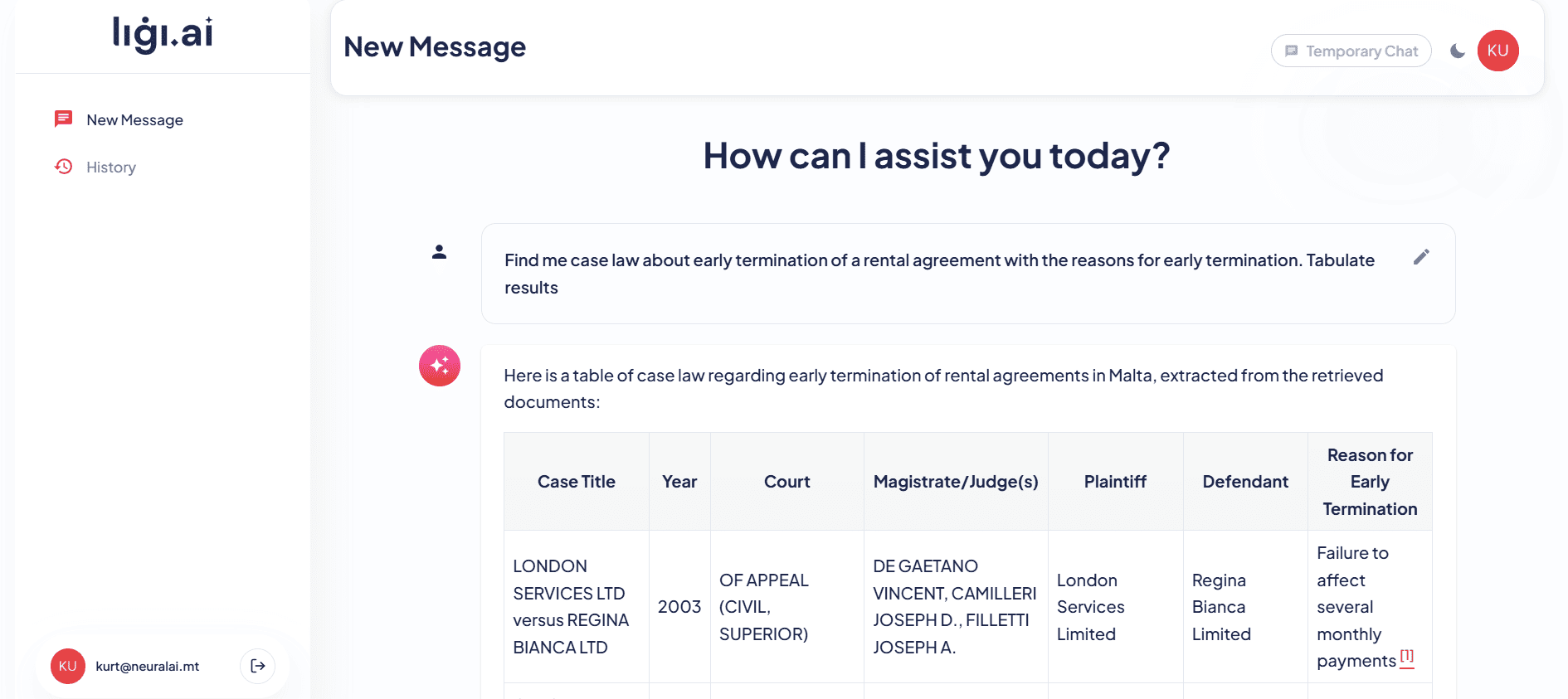

Ligi.ai - AI Legal Research Platform

Neural AI built Ligi.ai, a custom AI legal assistant for Maltese law firms that combines retrieval-augmented generation with deep knowledge of Maltese legislation. The system assists lawyers with document drafting, legal research across case law, and document review, reducing research time by over 70%.

mySocialSecurity - Government Chatbot

Neural AI created an intelligent bilingual chatbot that connects to Malta's social security systems, giving citizens 24/7 Q&A guidance on their benefits, entitlements, and application processes in both English and Maltese.

Climate Action - Environmental Advisory

Neural AI developed a publicly available chatbot for climate-related funding schemes in Malta, helping citizens and businesses discover and apply for environmental grants and sustainability programmes.

Solutions Powered by NeuroRAG

Our Build AI services leverage NeuroRAG to deliver end-to-end solutions.

NeuroRAG FAQ

What types of documents can NeuroRAG ingest?

NeuroRAG ingests virtually any document format including PDFs, Word documents, Excel spreadsheets, PowerPoint presentations, web pages, plain text, Markdown, HTML, database records, and API responses. It also handles scanned documents through integration with NeuroDocument's OCR capabilities.

How does NeuroRAG prevent AI hallucinations?

NeuroRAG uses multiple safeguards: retrieval confidence scoring ensures only high-relevance passages reach the LLM, grounding verification checks that generated answers are supported by retrieved content, and the system explicitly flags uncertainty when the knowledge base lacks sufficient information rather than generating speculative answers.

Can NeuroRAG handle Maltese language content?

Yes, through integration with NeuroMaltese, NeuroRAG fully supports Maltese language documents and queries. It handles bilingual content and Maltese-English code-switching, making it ideal for Malta's bilingual business and government environments.

How quickly can NeuroRAG be deployed?

A basic NeuroRAG deployment with your existing knowledge base can be operational within 2-4 weeks. More complex deployments with custom integrations, multi-source ingestion, and advanced retrieval tuning typically take 4-8 weeks.

Does NeuroRAG support real-time knowledge updates?

Yes, NeuroRAG supports incremental indexing that adds new or updated documents to the knowledge base without downtime. Changes are typically searchable within minutes of ingestion, ensuring your AI assistant always has access to the latest information.

Which LLMs does NeuroRAG support?

NeuroRAG works with multiple LLM providers through NeuroIntelligence, including OpenAI GPT-4, Anthropic Claude, Google Gemini, and open-source models. The system can route queries to different models based on complexity and cost requirements.
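Routing by complexity and cost could look something like the sketch below. The tier names, prices, keyword heuristic, and `route` function are all invented for illustration; they are not real model identifiers or NeuroIntelligence's routing logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str                  # hypothetical tier label, not a real model ID
    cost_per_1k_tokens: float  # illustrative relative cost
    max_complexity: int        # highest complexity score this tier should handle


TIERS = [
    ModelTier("small-model", 0.1, 1),
    ModelTier("medium-model", 1.0, 3),
    ModelTier("large-model", 10.0, 5),
]

REASONING_HINTS = ("compare", "why", "explain", "summarise", "draft")


def complexity_score(query: str) -> int:
    """Very rough heuristic: long queries and reasoning verbs score higher."""
    words = query.lower().split()
    score = 1
    if len(words) > 20:
        score += 2
    score += sum(1 for hint in REASONING_HINTS if hint in words)
    return min(score, 5)


def route(query: str, budget_per_1k: float = float("inf")) -> ModelTier:
    """Pick the cheapest affordable tier that can handle the query."""
    needed = complexity_score(query)
    affordable = [t for t in TIERS if t.cost_per_1k_tokens <= budget_per_1k]
    if not affordable:
        raise ValueError("no model tier fits the budget")
    for tier in sorted(affordable, key=lambda t: t.cost_per_1k_tokens):
        if tier.max_complexity >= needed:
            return tier
    # Nothing affordable is strong enough: use the most capable affordable tier.
    return max(affordable, key=lambda t: t.max_complexity)
```

A simple lookup question would go to the cheapest tier here, while a query asking to explain and compare clauses would be sent to the largest tier unless a budget cap rules it out.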

How does NeuroRAG handle sensitive or confidential data?

NeuroRAG can be deployed in your private cloud or on-premises infrastructure, ensuring sensitive data never leaves your environment. Role-based access controls, encryption at rest and in transit, and comprehensive audit logging meet enterprise security requirements.

What is the cost structure for NeuroRAG?

NeuroRAG pricing is based on the volume of indexed content, the number of queries, and the LLMs used. Contact our team for a detailed quote tailored to your specific requirements and scale.

Start Your AI Journey

Contact Us

Reach out through our form or book a call to discuss your AI needs.

Get a Consultation

Our AI experts analyse your requirements and identify the best approach.

Receive a Proposal

We deliver a detailed proposal with timeline, deliverables, and investment.

Project Kickoff

We assemble your team and begin building your AI solution.

Ready to Deploy NeuroRAG?

Book a free consultation with our team to discuss how NeuroRAG can be integrated into your business workflows.