AI Data Engineering Malta

AI data engineering in Malta. Build the data infrastructure that AI and machine learning models need: feature stores, training pipelines, ML data ops, and model serving for Maltese businesses.

Schedule a Consultation →Trusted By Leading Organisations

AI and machine learning projects succeed or fail based on data quality and accessibility, yet most organisations underinvest in the data engineering that underpins their AI ambitions. Neural AI in Malta specialises in building the data infrastructure specifically designed for machine learning: feature stores, training data pipelines, labelling workflows, and data quality systems that make AI development reliable and efficient.

The Data Foundation for Successful AI

The most common reason AI projects fail is not algorithm selection or model architecture but poor data quality, inconsistent feature computation, and fragile data pipelines. Our AI data engineering services address the unique requirements of ML systems that general-purpose data engineering does not cover. Feature stores provide consistent feature computation across training and serving. Versioned datasets ensure experimental reproducibility. Data quality monitoring catches distribution drift before it degrades model performance.

Malta businesses investing in AI capabilities benefit enormously from purpose-built data infrastructure. Our feature stores and training data pipelines accelerate model development cycles, reduce data-related production incidents, and enable multiple data science teams to share and reuse curated data assets across projects.

Feature Store Architecture

Feature stores are the critical bridge between data engineering and machine learning. Without a centralised feature store, data scientists compute features differently for training versus production, introducing training-serving skew that silently degrades model accuracy. Our feature store implementations on Feast, Databricks Feature Store, or AWS SageMaker provide versioned feature definitions, batch and real-time serving, and lineage tracking.

For Malta iGaming companies building player personalisation models, feature stores serve real-time player behaviour signals alongside historical aggregations. Financial institutions use feature stores to ensure credit scoring and AML models score production transactions with exactly the same feature computations used during model training.

Training Data Pipeline Engineering

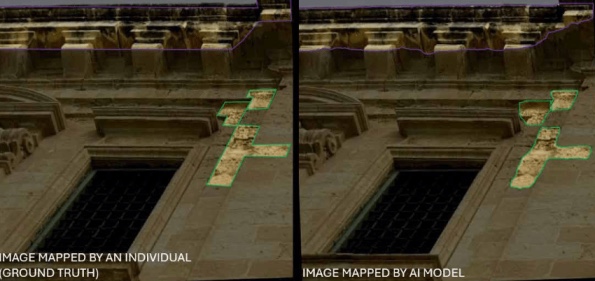

Training data quality directly determines model accuracy. Our automated pipelines prepare, validate, version, and deliver training datasets with reproducible processes. Data augmentation, balanced sampling, and train-test splitting follow best practices for each data type, whether tabular, image, text, or time-series. The LiMap project demonstrates our training pipeline capability, processing 10,000+ annotated images for deterioration detection model training.

Every training dataset is versioned and linked to the pipeline configuration that produced it. When a model produces unexpected results, your team can trace back to the exact dataset, feature computations, and preprocessing steps involved. This traceability is essential for regulated industries in Malta where model decisions must be auditable and explainable.

Transform Your Business with Custom AI Solutions

Neural AI's ai data engineering solutions streamline processes and automate tasks, delivering measurable ROI for organisations in Malta and beyond. Let's discuss your project.

Schedule a Consultation →Cost Reduction

Availability

Response Time

Scale Capacity

Industry Applications

See how this solution transforms operations across different sectors.

- • Build feature stores that serve real-time player behaviour features for personalisation, risk scoring, and responsible gaming models

- • Training data pipelines curate player interaction data with proper privacy controls for Malta-licensed operators building ML-powered player experiences

- • Develop ML data infrastructure for credit scoring, fraud detection, and AML models with regulatory-grade data lineage and versioning

- • Feature stores ensure consistent risk scoring between model development and production serving across Malta financial institutions

- • Build GDPR-compliant training data pipelines for medical imaging, clinical prediction, and drug discovery ML models

- • Anonymisation, consent tracking, and access controls ensure patient data is handled appropriately throughout the ML development lifecycle

- • Create sensor data pipelines and feature stores for predictive maintenance, quality prediction, and process optimisation models

- • Real-time feature serving enables ML models to score equipment health and product quality using live IoT sensor data from Malta manufacturing facilities

- • Leverage Data Engineering solutions to transform operations, reduce costs, and drive innovation in the Government & Public Sector sector

- • Leverage Data Engineering solutions to transform operations, reduce costs, and drive innovation in the AML & Compliance sector

- • Leverage Data Engineering solutions to transform operations, reduce costs, and drive innovation in the Real Estate sector

- • Leverage Data Engineering solutions to transform operations, reduce costs, and drive innovation in the Hospitality & Tourism sector

- • Leverage Data Engineering solutions to transform operations, reduce costs, and drive innovation in the Retail sector

- • Leverage Data Engineering solutions to transform operations, reduce costs, and drive innovation in the Education sector

- • Leverage Data Engineering solutions to transform operations, reduce costs, and drive innovation in the Telecommunications sector

- • Leverage Data Engineering solutions to transform operations, reduce costs, and drive innovation in the Insurance sector

- • Leverage Data Engineering solutions to transform operations, reduce costs, and drive innovation in the Architecture sector

- • Leverage Data Engineering solutions to transform operations, reduce costs, and drive innovation in the Startup sector

- • Leverage Data Engineering solutions to transform operations, reduce costs, and drive innovation in the Logistics & Supply Chain sector

- • Leverage Data Engineering solutions to transform operations, reduce costs, and drive innovation in the Legal sector

- • Leverage Data Engineering solutions to transform operations, reduce costs, and drive innovation in the Information Technology & Security sector

Key Features

Feature Store Development

Centralised feature stores that serve consistent, pre-computed features to both model training and real-time inference. Eliminate feature computation duplication and training-serving skew that causes production ML failures. Support both batch and real-time feature serving with versioned feature definitions and lineage tracking.

Training Data Pipelines

Automated pipelines that prepare, validate, version, and deliver training datasets for machine learning workflows. Handle labelling orchestration, data augmentation, balanced sampling, and train-test splitting with reproducible processes that ensure every model training run uses traceable, quality-controlled data.

Data Labelling & Annotation

Managed data labelling workflows combining human annotators with semi-automated labelling tools for images, text, audio, and structured data. Quality-controlled annotation with inter-annotator agreement tracking, active learning prioritisation, and review cycles that produce training data meeting production accuracy requirements.

ML Data Quality Monitoring

Continuous monitoring of data distributions, feature drift, label quality, and data freshness metrics that affect model performance. Statistical tests detect distributional shifts in production data that would degrade model accuracy, triggering retraining alerts before prediction quality deteriorates noticeably.

Benefits

Discover how our ai data engineering services deliver measurable results for your organisation.

01 Accelerate ML Development Cycles

Pre-built data infrastructure reduces the time data scientists spend on data preparation from 80% to 20% of their working hours. Malta AI teams focus on modelling and experimentation rather than data wrangling, shortening model development cycles from months to weeks.

02 Reproducible ML Experiments

Versioned datasets, feature definitions, and pipeline configurations ensure ML experiments are fully reproducible. Track exactly which data version, feature computations, and preprocessing steps produced which model results across your entire model development history.

03 Production-Ready Data Infrastructure

Data engineered for ML production handles edge cases, missing values, schema changes, and distribution shifts that cause models to fail silently in deployment. Malta businesses avoid the common pattern of models that work in notebooks but break in production.

04 Cross-Team Feature Sharing

Feature stores and catalogued datasets enable different ML teams to discover, share, and reuse data assets. Prevent duplicated feature engineering effort and ensure consistent feature definitions across all models, reducing engineering overhead by 40-60%.

Our AI Data Engineering Process

We work with your data science team to document feature requirements, data freshness needs, serving latency targets, and quality standards for each ML use case. This analysis shapes the data infrastructure architecture.

We design and build centralised feature stores with batch and real-time serving capabilities. Feature definitions, computation logic, and serving configurations are version-controlled and documented for team-wide reuse.

We build automated training data pipelines that produce versioned, validated datasets on schedule. Data augmentation, sampling strategies, and quality checks ensure training data meets model requirements consistently.

We configure labelling platforms, define annotation guidelines, implement quality controls, and establish review processes. Active learning integration prioritises the most informative samples for annotation to maximise labelling efficiency.

We deploy statistical monitoring that continuously compares production data distributions against training data baselines. Drift detection algorithms identify feature distribution changes, data quality degradation, and concept drift.

We integrate data infrastructure with your ML platform including experiment tracking, model registry, and deployment pipelines. End-to-end MLOps ensures seamless flow from data preparation through model training to production serving.

01

ML Data Requirements Analysis

Step 1 of 6

Proven Results

LiMap Site Deterioration Detection

We developed a custom computer vision model for AP Valletta that detects deterioration patterns including cracks, erosion, and staining from standard site photographs. The AI automatically maps detected damage onto AutoCAD drawings, reducing manual processing time by over 80%.

Tipico AML

We migrated Tipico's AML data science workflows from KNIME to Python-based big data analytics with AWS Airflow automation, achieving up to 70% faster ETL pipeline execution and improved risk-ranking accuracy.

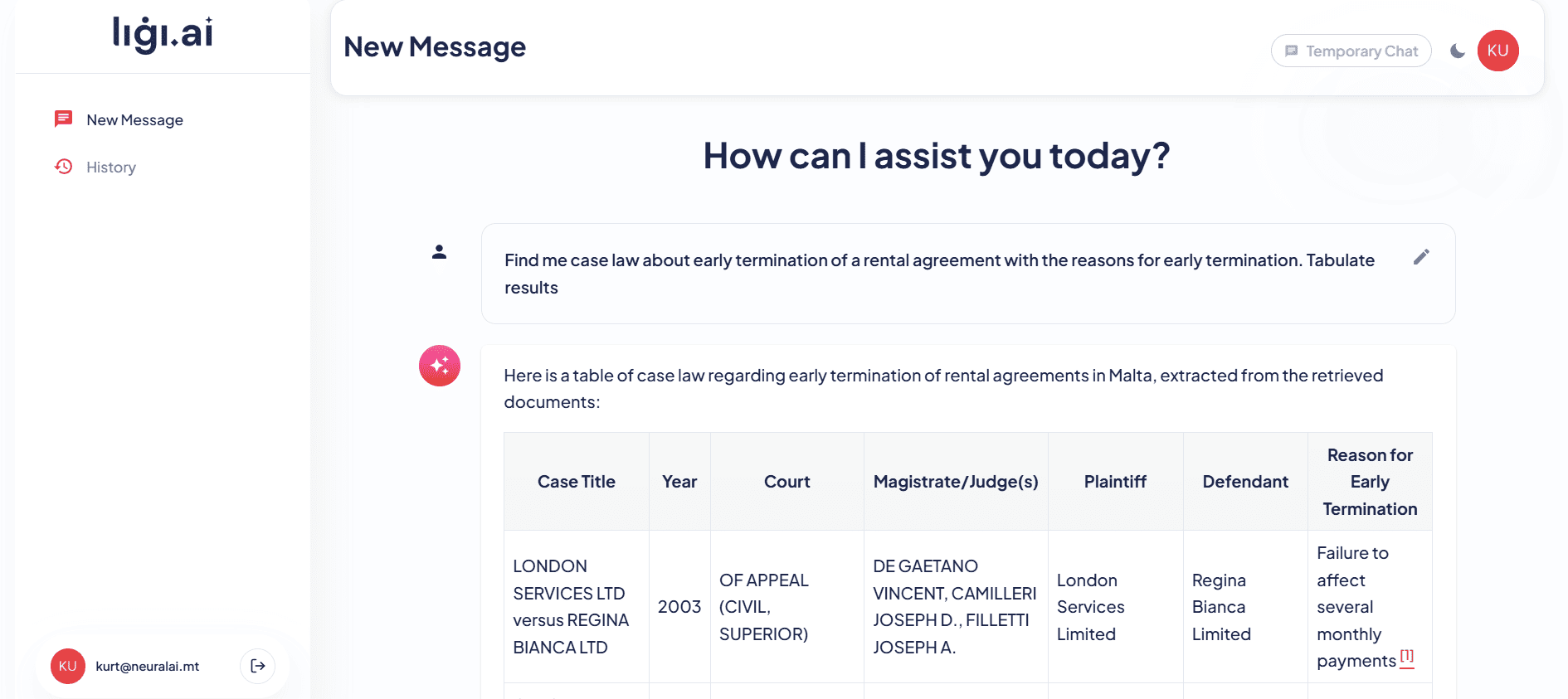

Ligi.ai Legal Sector

Neural AI built Ligi.ai, a custom AI legal assistant for Maltese law firms that combines retrieval-augmented generation with deep knowledge of Maltese legislation. The system assists lawyers with document drafting, legal research across case law, and document review, reducing research time by over 70%.

Powered by Neural AI Products

Our proprietary AI product suite that accelerates delivery and reduces cost.

NeuroRAG →

Grounds every response in your actual business data through retrieval-augmented generation, connecting to your knowledge base and documentation to ensure accurate, hallucination-free outputs.

NeuroCV →

Computer vision engine that analyses images and video in real-time, powering visual inspection, object detection, facial recognition, and document scanning capabilities.

NeuroAgentic →

Enables AI systems to go beyond answering questions to actually executing tasks, such as booking appointments, processing refunds, updating records, and triggering workflows across your systems.

NeuroIntelligence →

Business intelligence layer that transforms raw data into actionable insights through automated analysis, anomaly detection, and predictive modelling.

Our Data Engineering Tech Stack

Technologies

Flexible Engagement Models

Choose the engagement model that best fits your organisation's needs and goals.

Project-Based

Clearly scoped AI projects with defined deliverables, timelines, and budgets. Ideal for proof-of-concepts, MVPs, or specific AI implementations.

Team Extension

Augment your existing team with our AI specialists. We integrate seamlessly into your workflows, tools, and culture to accelerate delivery.

Dedicated AI Team

A full AI team embedded in your organisation, working exclusively on your projects with deep domain knowledge and consistent delivery.

Ready to Discuss Your AI Data Engineering Project?

Book a free consultation with our Malta-based AI team and discover how we can help.

Book a Free AI Consultation →Investment & Timeline

Transparent ballpark pricing to help you plan your project. Final costs depend on scope, integrations, and complexity.

Starter

- Data audit & architecture review

- Single data pipeline build

- Source → destination integration (2 systems)

- Basic data quality checks

- Documentation & handover

- 30-day post-launch support

Growth

- Multi-source data ingestion (up to 6 sources)

- Data warehouse or lake setup

- Transformation layer (dbt or equivalent)

- Orchestration (Airflow / Prefect)

- Data quality monitoring & alerting

- BI-ready data models

- 90-day post-launch support

Enterprise

- Enterprise data platform architecture

- Real-time streaming (Kafka / Flink)

- Data governance & lineage tracking

- Cost optimisation for cloud data warehouse

- Team training & documentation

- Ongoing retainer option available

All estimates are project-specific. Book a discovery call for a tailored quote. Prices shown are indicative ranges for Malta market engagements.

Common Scenarios We Work On

Real situations our clients bring to us — if any of these sound familiar, we can help.

Head of Data, retail group

"Our sales data lives in three different systems — Shopify, our ERP, and a warehouse management tool — and we can't get a single view of inventory performance"

We build a unified data pipeline that ingests from all three sources, applies consistent business logic, and loads into a data warehouse your BI team can query in real time.

CTO, fintech startup

"We process 50,000 transactions per day and our analytics queries take 20 minutes to run — we need a proper data infrastructure that scales"

We architect a streaming-capable data platform using Kafka for ingestion and a columnar data warehouse (BigQuery/Snowflake/Redshift), reducing your query times to seconds.

Data Analyst, insurance company

"Our data pipelines keep breaking every time the source system updates its schema — we spend more time fixing pipelines than doing actual analysis"

We rebuild your pipelines with schema evolution handling, automated data quality checks, and alerting so failures are caught and self-healed before they impact your analysts.

Operations Director, logistics company

"We want to use AI and ML for route optimisation but our data is scattered, inconsistent, and in five different formats — we've been told our data isn't ready for AI"

We perform a data readiness assessment and build the clean, structured data foundation your ML models need — standardising formats, filling gaps, and creating the feature store for your AI project.

Why Clients Trust Neural AI

AI projects delivered across Malta and Europe

Malta-based team, EU data residency & GDPR compliance

End-to-end delivery from strategy to production

Ongoing support & maintenance included post-launch

AI Data Engineering FAQ

What is a feature store and why do we need one?

A feature store is a centralised repository for ML features that serves consistent data to both training and inference. Without one, teams often compute features differently for training versus production, causing training-serving skew that degrades model accuracy. Feature stores also enable feature sharing across teams, reducing duplicated engineering work.

How does AI data engineering differ from regular data engineering?

AI data engineering addresses ML-specific requirements that general data engineering does not cover: feature computation and serving, training data versioning and validation, data drift monitoring, labelling workflows, and the need for consistent data between training and inference environments. It is a specialised layer built on top of general data infrastructure.

What tools do you use for feature stores?

We work with Feast for open-source feature stores, Databricks Feature Store for Databricks-centric environments, AWS SageMaker Feature Store for AWS deployments, and custom feature store implementations when specific requirements warrant it. Tool selection depends on your ML platform, latency requirements, and existing infrastructure.

How do you handle data labelling quality?

We implement multi-annotator labelling with inter-annotator agreement measurement, review cycles for disagreements, and quality audits on random samples. Active learning identifies the most informative samples for annotation. Labelling guidelines are documented and iterated based on edge cases discovered during annotation.

What is data drift and how do you detect it?

Data drift occurs when the statistical distribution of production data changes from what the model was trained on, causing accuracy degradation. We monitor feature distributions using statistical tests like Kolmogorov-Smirnov, Population Stability Index, and Jensen-Shannon divergence, alerting your team when drift exceeds configured thresholds.

Can you work with our existing ML platform?

Yes, we integrate with popular ML platforms including Databricks MLflow, AWS SageMaker, Azure ML, Google Vertex AI, and open-source tools like Kubeflow and MLflow. Our data infrastructure feeds into your existing model training and serving pipelines without requiring platform changes.

How do you version training datasets?

We use DVC, Delta Lake versioning, or cloud-native dataset versioning to create immutable snapshots of training data. Each model training run references a specific dataset version, enabling full reproducibility. Version metadata includes data lineage, quality metrics, and the pipeline configuration that produced the dataset.

What about unstructured data like images and text?

Our pipelines handle unstructured data including image preprocessing, text tokenisation, embedding generation, and multimodal data alignment. We build specialised pipelines for computer vision training data, NLP corpora, and document processing datasets with appropriate augmentation and quality controls.

Explore More AI Solutions

Data Engineering Services

Comprehensive data engineering that provides the foundational infrastructure on which AI data engineering capabilities are built.

Explore →Machine Learning Development

Custom ML model development that leverages the feature stores and training pipelines built by our AI data engineering team.

Explore →Data Pipeline Development

Production-grade pipeline engineering that feeds training data, features, and inference inputs to ML systems reliably.

Explore →Databricks Services

Databricks platform services including MLflow, Feature Store, and Unity Catalog for unified ML data management.

Explore →Related Articles

Data Engineering Best Practices for Maltese Companies

Essential data engineering practices for Maltese businesses, from pipeline architecture and data quality to cloud platforms and team structure.

Read article →

Big Data Analytics in Malta: A Comprehensive Guide

A comprehensive guide to big data analytics for Maltese businesses, covering data strategy, infrastructure, tools, and real-world applications across key industries.

Read article →

The Role of Big Data and Data Analytics in Business Growth

Learn how big data and data analytics drive business growth through better decision-making, customer insights, and operational optimisation.

Read article →Start Your AI Journey

Contact Us

Reach out through our form or book a call to discuss your AI needs.

Get a Consultation

Our AI experts analyse your requirements and identify the best approach.

Receive a Proposal

We deliver a detailed proposal with timeline, deliverables, and investment.

Project Kickoff

We assemble your team and begin building your AI solution.

Contact Us

Reach out through our form or book a call to discuss your AI needs.

Get a Consultation

Our AI experts analyse your requirements and identify the best approach.

Receive a Proposal

We deliver a detailed proposal with timeline, deliverables, and investment.

Project Kickoff

We assemble your team and begin building your AI solution.

Ready to Get Started?

Book a free AI consultation with our Malta-based team and discover how we can transform your business with intelligent solutions.