NeuroIntelligence

Add human-level reasoning to your business processes with LLM intelligence

LLM-powered logic, reasoning, and decision-making engine that brings advanced AI cognition to complex business processes and analysis.

NeuroIntelligence is the cognitive core of the NeuroStack platform, providing large language model capabilities for reasoning, analysis, decision-making, and content generation across all Neural AI products. It abstracts the complexity of working with multiple LLM providers behind a unified interface optimised for enterprise use. As the most broadly integrated component in NeuroStack, NeuroIntelligence powers nine major client deployments spanning legal, government, financial, and educational sectors.

Multi-Model Orchestration

NeuroIntelligence routes tasks to the most appropriate LLM based on complexity, cost, speed, and capability requirements. Simple classification tasks use fast, cost-effective models, while complex reasoning chains leverage frontier models. This intelligent routing optimises both quality and cost across your AI workloads. Our LLM development expertise ensures each deployment uses the optimal model configuration for its specific domain.
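As a rough illustration of this kind of routing, the sketch below picks the cheapest model tier that can handle a task within an optional latency budget. The tier names, prices, latencies, and the 1-5 complexity scale are all hypothetical placeholders, not NeuroIntelligence's actual configuration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelTier:
    name: str                  # hypothetical label, not a real model id
    max_complexity: int        # highest task complexity (1-5) the tier handles well
    cost_per_1k_tokens: float  # illustrative price only
    typical_latency_ms: int    # illustrative latency only


TIERS = [
    ModelTier("fast-small", 2, 0.0005, 300),
    ModelTier("balanced-mid", 4, 0.003, 900),
    ModelTier("frontier-large", 5, 0.015, 3000),
]


def route(complexity: int, latency_budget_ms: Optional[int] = None) -> ModelTier:
    """Return the cheapest tier that can handle the task within the budget."""
    capable = [t for t in TIERS if t.max_complexity >= complexity]
    if not capable:
        raise ValueError(f"no tier supports complexity {complexity}")
    if latency_budget_ms is not None:
        within = [t for t in capable if t.typical_latency_ms <= latency_budget_ms]
        # If no capable tier meets the budget, fall back to the fastest capable one.
        capable = within or [min(capable, key=lambda t: t.typical_latency_ms)]
    return min(capable, key=lambda t: t.cost_per_1k_tokens)
```

Under these assumed tiers, a simple classification task (`route(1)`) lands on the small model, while a complexity-5 reasoning task lands on the frontier tier.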

Structured Reasoning

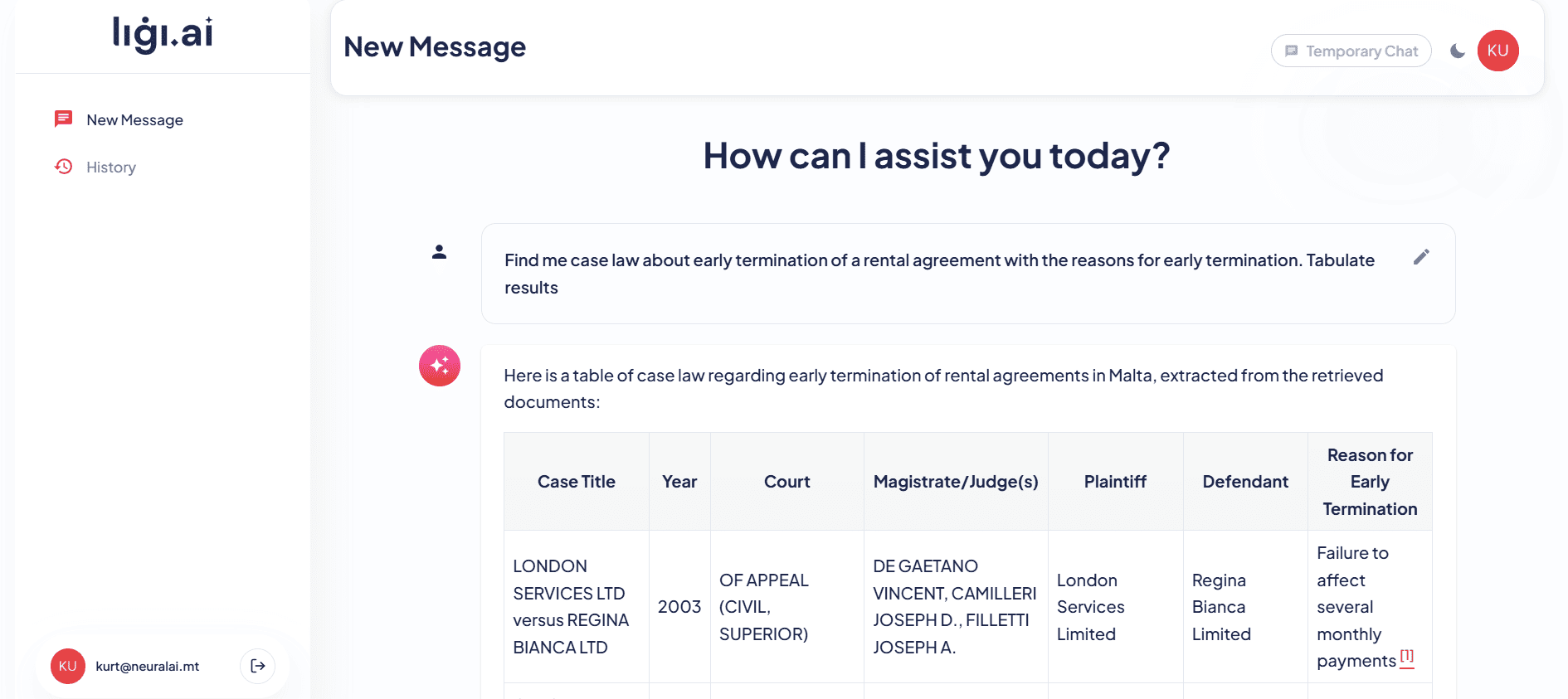

Beyond simple text generation, NeuroIntelligence implements chain-of-thought reasoning, structured output generation, and logical analysis pipelines. For Ligi.ai, it reasons through legal questions by analysing relevant statutes, precedents, and regulatory guidance retrieved by NeuroRAG. For the Grocery Price Monitor, it performs product matching analysis across retailers using NeuroCompare comparison data.

Domain Specialisation

NeuroIntelligence is tuned for specific domains through prompt engineering, fine-tuning, and knowledge augmentation. Each deployment incorporates domain expertise — legal reasoning for Ligi.ai, financial analysis for the ARB, compliance assessment for mySocialSecurity — that generic LLMs lack. Combined with NeuroMaltese for Maltese-language understanding, NeuroIntelligence delivers domain-specific reasoning in both of Malta’s languages.

Enterprise Reliability

NeuroIntelligence includes output validation, guardrails against harmful content, factual grounding through NeuroRAG integration, and comprehensive logging. Every inference is tracked with latency, token usage, cost, and quality metrics. Automatic failover ensures continuity if any single model provider experiences downtime.

Other NeuroStack components build on this foundation: NeuroAgentic uses NeuroIntelligence to create autonomous agents, while NeuroSummarisation uses it for document condensation. For the GPT Cloud Migration project, NeuroIntelligence powered error detection across legacy data alongside NeuroDocument and NeuroFinance. Across all deployments, NeuroIntelligence processes tens of thousands of inference requests daily with consistent quality, and simple tasks such as classification return in under a second.

Deploy NeuroIntelligence in Your Organisation

Neural AI's NeuroIntelligence accelerates delivery, reduces cost, and integrates seamlessly with your existing systems. Let's discuss how it fits your workflow.

Schedule a Consultation →

Key Features

Multi-Model Orchestration

NeuroIntelligence routes tasks to the most appropriate LLM based on complexity, cost, speed, and capability requirements. Simple classification uses fast, cost-effective models while complex reasoning chains leverage frontier models. This intelligent routing optimises both quality and cost across AI workloads without manual model selection.

Structured Reasoning Chains

Beyond simple text generation, NeuroIntelligence implements chain-of-thought reasoning, structured output generation, and logical analysis pipelines. The system breaks complex questions into sequential reasoning steps, validates intermediate conclusions, and assembles coherent final outputs with traceable logic.
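A minimal sketch of such a chain might look like the following. It assumes the LLM is any callable that maps a prompt string to a response string, and that `is_valid` is a caller-supplied check on each intermediate conclusion; both names, and the shape of the returned trace, are hypothetical and chosen only for illustration.

```python
from typing import Callable, Dict, List


def run_chain(
    question: str,
    steps: List[str],
    llm: Callable[[str], str],
    is_valid: Callable[[str], bool],
) -> Dict[str, object]:
    """Run reasoning steps in order, validating each intermediate conclusion."""
    if not steps:
        raise ValueError("at least one reasoning step is required")
    trace = []
    context = question
    for instruction in steps:
        prompt = f"{instruction}\n\nContext so far:\n{context}"
        conclusion = llm(prompt)
        if not is_valid(conclusion):
            raise ValueError(f"step failed validation: {instruction}")
        # Keep every step so the final answer has traceable logic.
        trace.append({"step": instruction, "conclusion": conclusion})
        context = f"{context}\n{conclusion}"
    return {"answer": trace[-1]["conclusion"], "trace": trace}
```

The returned `trace` lists each step with its conclusion, which is one simple way to make the final answer auditable.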

Domain Specialisation

NeuroIntelligence is tuned for specific domains through prompt engineering, fine-tuning, and knowledge augmentation. Each deployment incorporates domain expertise — legal reasoning for law firms, financial analysis for regulators, educational assessment for learning platforms — that generic LLMs lack.

Enterprise Guardrails & Reliability

Output validation, content guardrails, factual grounding through NeuroRAG integration, and comprehensive logging ensure enterprise reliability. Every inference is tracked with latency, token usage, cost, and quality metrics. Automatic failover ensures continuity when any single model provider experiences downtime.
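To make the per-inference tracking concrete, here is a small sketch of a wrapper that records latency, token usage, and cost for one call. The record fields, the `tracked_call` helper, and the whitespace-based token count are assumptions for illustration; a real deployment would use the provider's own token counts and pricing.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class InferenceRecord:
    model: str
    latency_ms: float
    prompt_tokens: int
    completion_tokens: int
    cost: float
    quality_score: Optional[float] = None  # filled in later by an evaluator, if any


def tracked_call(
    model: str,
    prompt: str,
    llm: Callable[[str], str],
    price_per_1k_tokens: float,
) -> Tuple[str, InferenceRecord]:
    """Call the model and return its output together with a metrics record."""
    start = time.perf_counter()
    output = llm(prompt)
    latency_ms = (time.perf_counter() - start) * 1000.0
    # Whitespace splitting is a crude stand-in for a real tokenizer.
    prompt_tokens = len(prompt.split())
    completion_tokens = len(output.split())
    cost = (prompt_tokens + completion_tokens) / 1000.0 * price_per_1k_tokens
    record = InferenceRecord(model, latency_ms, prompt_tokens, completion_tokens, cost)
    return output, record
```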

How NeuroIntelligence Works

Incoming requests are analysed to determine the type of reasoning required — classification, analysis, generation, or multi-step reasoning — and the optimal model and configuration for the task.

Relevant context is assembled from NeuroRAG knowledge bases, document extractions from NeuroDocument, and conversation history, providing the LLM with comprehensive information for informed reasoning.

The task is executed by the selected LLM with domain-specific prompting, structured output formatting, and intermediate validation steps. For complex reasoning, chain-of-thought processing breaks the problem into manageable steps.

Generated outputs pass through validation checks for factual grounding, format compliance, safety guardrails, and quality standards. Responses that fail validation are regenerated or flagged for human review.

Usage metrics, quality scores, and user feedback continuously improve model selection, prompt engineering, and routing decisions, ensuring the system gets better over time.
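The five steps above can be sketched as a single request handler. Every callable passed in (`classify`, `gather_context`, `generate`, `validate`, `record_feedback`) is a hypothetical stand-in for the corresponding stage, and the retry limit is an assumed parameter rather than a documented setting.

```python
from typing import Any, Callable, Dict


def handle_request(
    request: str,
    classify: Callable[[str], str],
    gather_context: Callable[[str], str],
    generate: Callable[[str, str, str], str],
    validate: Callable[[str], bool],
    record_feedback: Callable[[str, str, int], Any],
    max_attempts: int = 2,
) -> Dict[str, Any]:
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    task_type = classify(request)        # 1. task analysis
    context = gather_context(request)    # 2. context assembly
    for attempt in range(1, max_attempts + 1):
        output = generate(task_type, request, context)  # 3. execution
        if validate(output):                            # 4. validation
            status = "ok"
            break
    else:
        # Every attempt failed validation: flag for human review.
        status = "needs_human_review"
    record_feedback(task_type, status, attempt)         # 5. feedback
    return {"task_type": task_type, "output": output,
            "status": status, "attempts": attempt}
```

An output that fails validation is regenerated up to `max_attempts` times and, if it still fails, returned with a `needs_human_review` status.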

Use Cases

Automate complex decision-making that requires contextual reasoning

Analyse unstructured data and extract actionable business insights

Power AI agents that reason through multi-step problems autonomously

Generate structured analyses from raw text, documents, and data

Build AI-powered expert systems for specialised domains

Industry Applications

See how this solution transforms operations across different sectors.

- Powers legal reasoning engines that analyse statutes, precedents, and regulatory guidance, enabling AI-assisted legal research and document analysis with domain-specific understanding of Maltese and EU law

- Drives financial analysis including risk assessment, regulatory compliance reasoning, and transaction pattern analysis, bringing LLM intelligence to complex financial decision-making processes

- Enables intelligent government service systems that reason through citizen queries, policy requirements, and eligibility determinations with accuracy and transparency

- Powers educational assessment systems that evaluate student work against rubrics, provide detailed feedback, and generate personalised learning recommendations with pedagogical reasoning

- Predictive models for player behaviour analysis, fraud detection, and personalised gaming experiences powered by machine learning

- Machine learning models that detect suspicious transaction patterns and automate regulatory reporting workflows

- Property valuation models, market trend prediction, and tenant risk assessment using AI and historical data

- Demand forecasting, dynamic pricing, and personalised guest experience systems for hotels and tourism operators

- Customer segmentation, demand forecasting, and inventory optimisation powered by machine learning algorithms

- Network optimisation, churn prediction, and usage pattern analysis for telecoms operators

- Predictive maintenance, quality control automation, and production line optimisation using AI

- Claims prediction, risk assessment automation, and fraud detection models for insurance providers

- Clinical decision support, drug discovery acceleration, and patient outcome prediction models

- Generative design optimisation, structural analysis, and project cost estimation using AI

- Rapid ML prototyping and model development that gives startups a data-driven competitive advantage

- Route optimisation, demand forecasting, and warehouse automation powered by machine learning

- Threat detection, anomaly identification, and security incident prediction using AI models

Proven Results

Ligi.ai - Legal Reasoning Engine

Neural AI built Ligi.ai, a custom AI legal assistant for Maltese law firms that combines retrieval-augmented generation with deep knowledge of Maltese legislation. The system assists lawyers with document drafting, legal research across case law, and document review, reducing research time by over 70%.

ARB AML - Compliance Intelligence

An anti-money-laundering (AML) system that identifies individual bank statement entries and flags suspicious transactions using AI-powered document analysis, automated risk scoring, and compliance reporting.

Grocery Price Monitor - Market Analysis

An AI-powered system using web scraping and GPT-based NLP for product name matching across Maltese supermarkets, enabling real-time food price comparisons with predictive modelling for pricing trends.

Solutions Powered by NeuroIntelligence

Our Build AI services leverage NeuroIntelligence to deliver end-to-end solutions.

NeuroIntelligence FAQ

Which LLMs does NeuroIntelligence support?

NeuroIntelligence supports OpenAI GPT-4 and GPT-4o, Anthropic Claude, Google Gemini, Meta Llama, Mistral, and other open-source models. New models are integrated as they become available, and the routing layer automatically incorporates them based on benchmark performance.

How does NeuroIntelligence handle sensitive data?

NeuroIntelligence can route sensitive data exclusively to on-premises or private cloud models, ensuring it never leaves your environment. For cloud-hosted models, data processing agreements and encryption protect information in transit. PII detection can automatically redact sensitive fields before sending to external APIs.
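The two behaviours described here, keeping sensitive requests on-premises and redacting PII before an external call, could be sketched roughly as follows. The regular expressions cover only email addresses and phone-like numbers and are deliberately simplistic; the destination labels and function names are hypothetical.

```python
import re
from typing import Tuple

# Illustrative patterns only; production PII detection needs far broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def prepare_for_model(text: str, sensitive: bool) -> Tuple[str, str]:
    """Return (destination, payload) for a request."""
    if sensitive:
        # Sensitive requests stay inside the organisation's environment, unmodified.
        return "on_prem", text
    # Everything else may go to an external API, but only after redaction.
    return "external", redact(text)
```

For example, `redact("Mail anna@example.com or call +356 2123 4567")` returns `"Mail [EMAIL] or call [PHONE]"`.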

What is the latency for NeuroIntelligence responses?

Simple tasks like classification respond in under 500ms. Complex reasoning chains with multiple steps typically complete in 2-5 seconds. The routing layer optimises for latency requirements, using faster models when speed is more important than maximum quality.

Can NeuroIntelligence be fine-tuned for our domain?

Yes, we fine-tune models on your domain-specific data to improve accuracy for specialised tasks. Fine-tuning is particularly effective for classification, extraction, and domain-specific reasoning where generic models underperform.

How does the multi-model routing work?

Tasks are classified by complexity, sensitivity, and latency requirements. A routing layer selects the optimal model — using cost-effective models for simple tasks and frontier models for complex reasoning. This approach typically reduces LLM costs by 40-60% while maintaining quality.
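To see where a saving of this size can come from, the sketch below computes the blended cost of a workload when a share of its tokens is routed to a cheaper model. The prices and the 60% share are made-up inputs for the arithmetic, not measured figures from any deployment.

```python
def routing_saving(simple_share: float, cheap_price: float, frontier_price: float) -> float:
    """Fraction of cost saved versus sending every token to the frontier model.

    simple_share is the fraction of tokens routed to the cheaper model;
    both prices must use the same unit (for example, cost per 1k tokens).
    """
    if not 0.0 <= simple_share <= 1.0:
        raise ValueError("simple_share must be between 0 and 1")
    blended = simple_share * cheap_price + (1.0 - simple_share) * frontier_price
    return 1.0 - blended / frontier_price


# Hypothetical inputs: 60% of tokens go to a model priced at a tenth of the frontier one.
saving = routing_saving(0.6, cheap_price=1.0, frontier_price=10.0)
print(f"{saving:.0%}")  # prints 54%
```

With these assumed inputs the blended price is 0.6 × 1 + 0.4 × 10 = 4.6 against a baseline of 10, a 54% saving. The actual figure depends on the task mix and the price gap between models.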

What happens if an LLM provider has an outage?

NeuroIntelligence includes automatic failover between providers. If OpenAI is unavailable, tasks route to Anthropic or Google Gemini without interruption. Health monitoring detects provider issues within seconds and triggers failover automatically.
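A simplified sketch of provider failover is shown below. It tries providers in priority order and moves on when one signals that it is unavailable. The `ProviderUnavailable` exception and the provider callables are hypothetical; real health monitoring would also track provider status proactively rather than only reacting to failed calls.

```python
from typing import Callable, List, Tuple


class ProviderUnavailable(Exception):
    """Raised by a provider callable when its service cannot be reached."""


def call_with_failover(
    prompt: str,
    providers: List[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try each provider in order; return (provider_name, response)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderUnavailable as exc:
            # Remember the failure and move on to the next provider.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))
```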

Start Your AI Journey

Contact Us

Reach out through our form or book a call to discuss your AI needs.

Get a Consultation

Our AI experts analyse your requirements and identify the best approach.

Receive a Proposal

We deliver a detailed proposal with timeline, deliverables, and investment.

Project Kickoff

We assemble your team and begin building your AI solution.

Ready to Deploy NeuroIntelligence?

Book a free consultation with our team to discuss how NeuroIntelligence can be integrated into your business workflows.